Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

Physical Intelligence, the two-year-old, San Francisco-based robotics startup that has quietly become one of the Bay Area’s most watched AI companies, published new research Thursday showing that its latest model can direct robots to perform tasks they were never explicitly trained on, something the company’s researchers say surprised them.

The new model, called π0.7, represents what the company describes as an early but meaningful step toward the long-sought goal of a general-purpose robot brain: one that can be pointed at an unfamiliar task, coached through it in plain language, and pull it off. If the findings hold up to scrutiny, they suggest that robotic AI may be approaching a turning point similar to the one the field saw with large language models, where capabilities begin to expand in ways that were not previously apparent.

The paper’s central claim concerns compositional generalization: the ability to combine skills learned in different contexts to solve problems the model has never faced before. Until now, the standard way to train robots has amounted to rote learning: collect data for a specific task, train a specialist model on that data, then repeat for each new task. π0.7, Physical Intelligence says, breaks that pattern.

Once a model gets past the point where it does only what its data was collected for and starts recombining things in new ways, says Sergey Levine, co-founder of Physical Intelligence and a UC Berkeley professor who focuses on robotics AI, its capabilities grow out of proportion to the amount of data it sees, much as has happened in language and vision.

The paper’s most striking demonstration involves an air fryer that the model had essentially never seen in training. When the research team investigated, they found only two relevant episodes in the entire training dataset: one in which a different robot simply pushed an air fryer closed, and another, from an open-source dataset, in which yet another robot placed a plastic bottle inside one on someone’s instruction. The model combined those fragments, along with broader data from the web, into a working understanding of how the appliance operates.

“It’s very difficult to track where the knowledge is coming from, or where it will succeed or fail,” says Lucy Shi, a Physical Intelligence researcher and Stanford computer science Ph.D. student. Even so, with no coaching at all, the model attempted to use the appliance to cook potatoes. With step-by-step instructions (essentially, a human walking the robot through the process the way one would explain something to a new employee), it succeeded.

This coaching capability matters because it suggests that robots could be deployed in new environments and guided in real time without additional data collection or model retraining.

The researchers are upfront about the model’s limitations and careful not to overstate their results. At one point they even point the finger at their own team.

“Sometimes the failure is not in the robot or in the model,” says Shi. “It’s on us, not being good at prompt engineering.” She describes an early air fryer experiment that had a 5% success rate. After the team spent about half an hour refining how the task was described to the model, the rate jumped to 95%, she says.

The model also cannot yet carry out complex multi-step tasks on its own from a single high-level command. “You can’t say, ‘Hey, go make me some toast,’” says Levine. “But if you walk it through, ‘for the toaster, open this part, push the button, do this,’ then it works.”

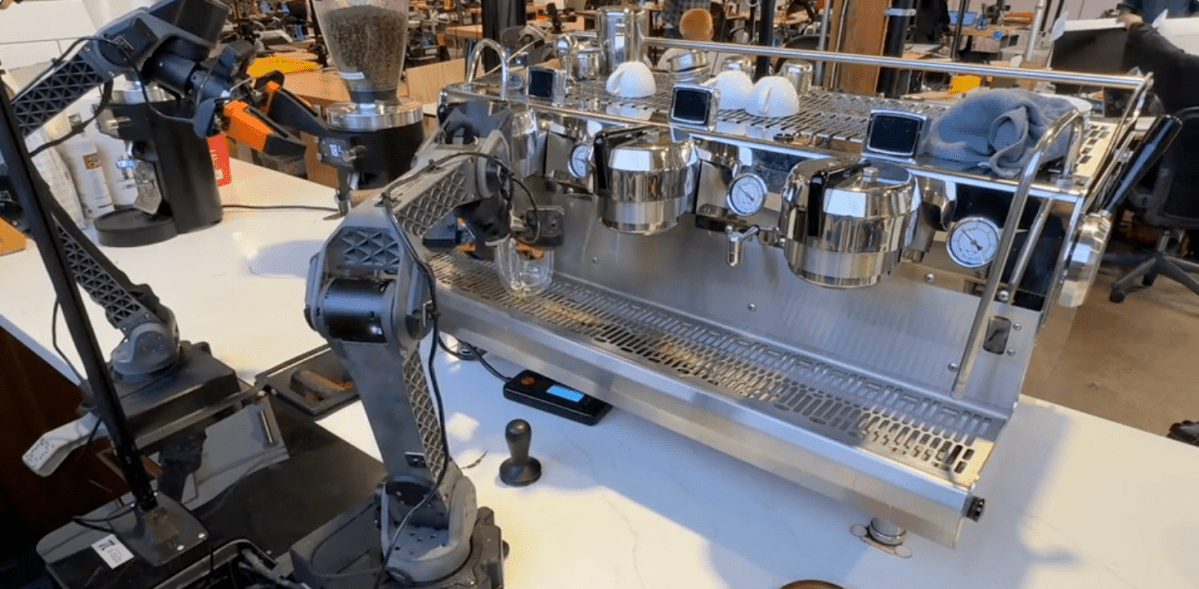

The team also acknowledged that standardized robotics benchmarks do not really exist, making external validation of their claims difficult. Instead, the company measured π0.7 against its own earlier specialist models, each trained for an individual task, and found that the generalist model matched their performance across a variety of complex tasks, including making coffee, folding laundry, and assembling boxes.

What may be most notable about this study, if you take the researchers at their word, is not any single demonstration but the degree to which the results surprised them: these are people whose job is to know exactly what is in the training data and, therefore, what the model should and should not be able to do.

“What I’ve always found is that when I have a deep understanding of the data, I can pretty much guess what the model will do,” says Ashwin Balakrishna, a research scientist at Physical Intelligence. “But a few months ago was the first time I was genuinely surprised. I just bought a random gear set and asked the robot, ‘Hey, can you turn the gear?’ And it just worked.”

Levine recalled the moment researchers first saw GPT-2 generate a news story about unicorns in the Andes. “Where did it learn about unicorns in Peru?” he says. “It’s an amazing combination. And I think seeing that in robotics is really special.”

Naturally, skeptics will point to a crucial asymmetry here: language models had the entire internet to learn from. Robots don’t, and no amount of clever prompting closes that gap. But when asked where he expects the skepticism to come from, Levine points elsewhere entirely.

“The objection that can always be leveled against any kind of robotic generalization is that the tasks are boring,” he says. “The robot isn’t doing a backflip.” He pushes back on that framing, arguing that the difference between an impressive robot demo and a robotic system that actually generalizes is the whole point. Generalization, he points out, always looks less dramatic than a carefully choreographed demo, but it is much more useful.

The paper itself uses conservative language throughout, describing π0.7 as showing “first signs” of generalization and “first indications” of new capabilities. These are research results, not a deployed product.

When asked specifically when a system based on these findings might be ready to be deployed in the real world, Levine declines to speculate. “I think there’s good reason to be optimistic, and it’s certainly progressing faster than I expected a few years ago,” he says. “But it is very difficult for me to answer this question.”

Physical Intelligence has raised more than $1 billion to date and was recently valued at $5.6 billion. A big part of the investor excitement around the company comes from Lachy Groom, a co-founder who spent many years as one of Silicon Valley’s best-known angel investors, backing Figma, Notion, and Ramp, among others, before deciding that Physical Intelligence was the company he had been looking for. That track record has helped the startup attract institutional funding even as it declines to commit to a commercialization timeline.

The company is now said to be discussing a new round that could roughly double that valuation, to $11 billion. The company declined to comment.