Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

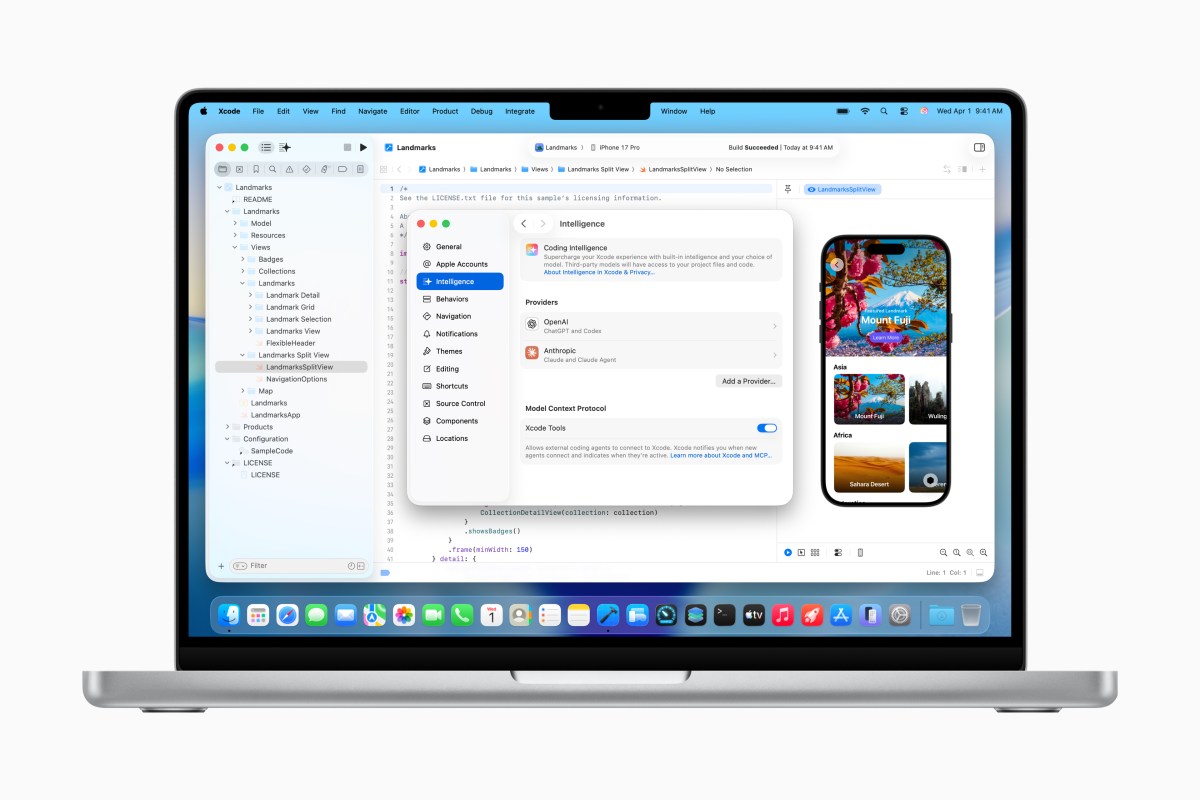

Apple is bringing agentic coding to Xcode. On Tuesday, the company announced the release of Xcode 26.3, which will allow developers to use agentic tools, including Anthropic’s Claude Agent and OpenAI’s Codex, directly in Apple’s official app development suite.

The Xcode 26.3 Release Candidate is available to all Apple developers today from Apple’s developer site and will arrive on the App Store later.

This latest update comes on the heels of last year’s Xcode 26 release, which introduced support for ChatGPT and Claude within Apple’s integrated development environment (IDE), used by developers building software for iPhone, iPad, Mac, Apple Watch, and other Apple platforms.

The integration of agentic tools allows AI models to tap into more of Xcode’s features to perform their tasks and carry out more complex work.

The models will also have access to Apple’s current developer documentation to ensure they use the latest APIs and follow best practices when building.

At launch, agents can help developers explore their project, understand its structure and metadata, then build the project and run tests to find any errors and fix them.

In preparation for this launch, Apple said it worked closely with Anthropic and OpenAI to develop the new technology. In particular, the company said that it has done a lot of work to optimize the use of tokens and tool calls, so that agents can run smoothly in Xcode.

Xcode uses MCP (Model Context Protocol) to expose its capabilities to agents and connect them to its tools. This means that Xcode can now work with any third-party MCP-compatible agent for things like project discovery, editing, file management, previews and shortcuts, and getting the latest version of the code.
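To make the MCP connection a little more concrete, here is a minimal sketch of the kind of messages an MCP client sends. MCP is built on JSON-RPC 2.0, and `tools/list` and `tools/call` are standard MCP methods. The tool name `build_project` and its `scheme` argument are hypothetical placeholders; the article does not say which tools Xcode actually exposes or what they are called.

```python
import json
from itertools import count

# Simple counter for JSON-RPC request ids.
_ids = count(1)


def make_request(method, params=None):
    """Build a JSON-RPC 2.0 request of the kind an MCP client sends."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


# Ask the server which tools it exposes (standard MCP method).
list_tools = make_request("tools/list")

# Invoke one tool by name (standard MCP method).
# "build_project" and "scheme" are hypothetical, for illustration only.
call_tool = make_request(
    "tools/call",
    {"name": "build_project", "arguments": {"scheme": "MyApp"}},
)

print(json.dumps(list_tools))
print(json.dumps(call_tool, indent=2))
```

Because every tool is discovered and invoked through the same two methods, an agent does not need Xcode-specific code to learn what the IDE can do, which is presumably what lets any MCP-compatible agent plug in.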

Developers who want to try the agentic coding feature should first download the agents they want to use from Xcode’s settings. They can also connect their accounts with the AI providers by signing in or adding their API key. A drop-down menu within the app allows developers to select the model version they want to use (e.g., GPT-5.2-Codex vs. GPT-5.1 mini).

In a prompt box on the left side of the screen, developers can use natural language commands to tell the agent what kind of project they want to build or what change they want to make to the code. For example, they can direct Xcode to add a feature to their app that uses one of Apple’s frameworks, and describe how it should look and work.

When the agent starts working, it breaks the work into smaller steps, so it’s easier to see what’s going on and how the code is changing. It will also look up the documentation it needs before it starts coding. The changes are highlighted visually within the code, and the project transcript on the side of the screen allows developers to learn what is happening under the hood.

This transparency will especially help new developers who are learning to code, Apple believes. To that end, the company is hosting a “code-together” session on Thursday on its developer site, where users can watch and learn how to use the agentic coding tools while coding along in real time in their own copy of Xcode.

At the end of its process, the AI agent verifies that the code it created works as expected. With the results of its tests in hand, the agent can iterate further on the project if it needs to fix errors or other problems. (Apple has found that asking the agent to think through its plans before writing code can sometimes improve the process, because it forces the agent to plan ahead.)
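The verify-then-iterate behavior described here can be pictured as a simple loop. The sketch below is only an illustration of that general pattern, not Apple’s implementation: `iterate_until_green`, `run_tests`, and `attempt_fix` are hypothetical names, and the "tests" are a toy list of failing test names.

```python
from typing import Callable, List


def iterate_until_green(
    run_tests: Callable[[], List[str]],
    attempt_fix: Callable[[List[str]], None],
    max_rounds: int = 3,
) -> bool:
    """Run tests, pass any failures to a fixer, and repeat.

    Returns True once the tests pass, or False if failures remain
    after max_rounds fix attempts.
    """
    for _ in range(max_rounds):
        failures = run_tests()
        if not failures:
            return True
        attempt_fix(failures)
    # One last check after the final fix attempt.
    return not run_tests()


# Toy stand-ins: each "fix" resolves one failing test per round.
failing = ["testLogin", "testLogout"]


def run_tests() -> List[str]:
    return list(failing)


def attempt_fix(failures: List[str]) -> None:
    failing.remove(failures[0])


succeeded = iterate_until_green(run_tests, attempt_fix)
print("all tests pass:", succeeded)
```

The `max_rounds` cap is a design choice for the sketch: it keeps the loop from running forever when a fix never lands.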

In addition, if developers are not happy with the result, they can easily revert their code to an earlier state at any time, since Xcode creates a milestone every time the agent makes a change.
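One way to picture this revert behavior is a history that snapshots the project before each agent change. The article does not describe how Xcode stores these restore points, so the `CheckpointHistory` class below is a hypothetical sketch of the general idea, using an in-memory dictionary of file contents in place of a real project.

```python
class CheckpointHistory:
    """Toy model: snapshot all files before every change (hypothetical)."""

    def __init__(self, files):
        self._files = dict(files)
        self._checkpoints = []

    def apply_change(self, path, new_text):
        """Record a snapshot, apply the change, return the checkpoint id."""
        self._checkpoints.append(dict(self._files))
        self._files[path] = new_text
        return len(self._checkpoints) - 1

    def revert_to(self, checkpoint_id):
        """Restore the state saved just before the given change."""
        self._files = dict(self._checkpoints[checkpoint_id])
        # Later checkpoints no longer apply once we roll back.
        del self._checkpoints[checkpoint_id:]

    @property
    def files(self):
        return dict(self._files)


history = CheckpointHistory({"ContentView.swift": "original"})
first = history.apply_change("ContentView.swift", "agent edit 1")
second = history.apply_change("ContentView.swift", "agent edit 2")

# Undo everything the agent did since the first change.
history.revert_to(first)
print(history.files)
```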