Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

Lily Jamali, North America Technology Correspondent, San Francisco, and Tiffany Turnbull, Sydney

When Stephen Scheeler became CEO of Facebook Australia in the early 2010s, he was convinced of the power of the internet and social media for the public good.

It would herald a new era of global connectivity and the democratization of learning. It would allow users to build their own public square without traditional gatekeepers.

“When I first joined there was an intoxicating sense of optimism that I think a lot of people around the world felt,” he told the BBC.

But by the time he left the company in 2017, seeds of doubt about his work had been planted, and they have grown ever since.

“There are a lot of benefits to these platforms, but there are also a lot of downsides,” he says.

That view is no longer uncommon as scrutiny of the largest social media companies intensifies around the world. Much of that scrutiny has focused on teenagers, who critics say have become a lucrative market for extremely wealthy global companies at the expense of their mental health and well-being.

Governments from Utah to the European Union have been trying to limit children’s use of social media. But the most radical step yet will come in Australia, where a ban on under-16s has put tech companies under pressure.

Many of the affected social media companies have spent a year loudly protesting the new law, which requires them to take “reasonable steps” to prevent underage users from having accounts on their platforms.

They claim the ban may actually make children less safe, argue that it violates their rights, and repeatedly point to questions about the technology that will be used to enforce the policy.

“Australia’s blanket censorship will leave its young people less informed, less connected and unable to navigate the space they should understand as adults,” said Paul Taske of NetChoice, a trade group representing several big tech companies.

Industry insiders worry Australia’s ban – the first of its kind – could inspire other countries.

“It could be a proof of concept that gets attention around the world,” said Nate Fast, a professor at the University of Southern California’s Marshall School of Business.

In recent years, multiple whistleblowers and lawsuits have alleged that social media companies put profits over user safety.

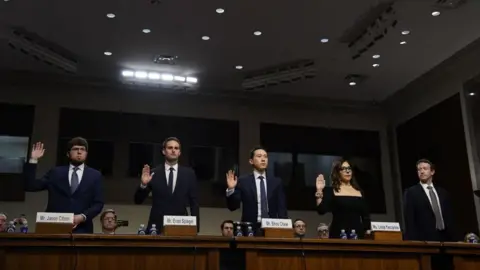

In January, a landmark trial will begin in the United States, hearing allegations that a number of companies, including Meta, TikTok, Snapchat and YouTube, designed their apps to be addictive and deliberately concealed the harm caused by their platforms. All of them deny the allegations, but Meta founder Mark Zuckerberg and Snap boss Evan Spiegel have both been ordered to testify in person.

The case consolidates hundreds of claims from parents and school districts and is one of the first to move forward from a slew of similar lawsuits alleging social media contributes to poor mental health and child exploitation.

In a separate ongoing case, state prosecutors accuse Zuckerberg of personally undermining efforts to improve youth well-being on the company’s platform, including vetoing a proposal to ditch Instagram’s face-changing beauty filters, which experts say can exacerbate body dysmorphia and eating disorders.

Former Meta employees Sarah Wynn-Williams, Frances Haugen and Arturo Béjar have testified before the U.S. Congress, alleging a range of misconduct they observed during their time at the company.

Meta maintains that the company has been working hard to create tools to keep teenagers safe online.

But the broader industry has also recently come under fire for mis- and disinformation, hate speech and violent content.

Video of Charlie Kirk’s assassination went viral across platforms, reaching even those who weren’t seeking it. Elon Musk is suing US states over laws requiring social media companies, including X, to define and disclose how they combat hate speech online. Meta came under heavy criticism earlier this year for announcing that it would eliminate the fact-checkers who monitor its platforms for misinformation.

A rare bipartisan front has emerged among U.S. lawmakers eager to rein in tech bosses.

At a hearing last year, Zuckerberg was urged to apologize to bereaved families who had come to watch in person. Among those in the audience was Tammy Rodriguez, whose 11-year-old daughter Selena took her own life after being sexually exploited on Instagram and Snapchat.

“That’s why we’re investing so much and we’re going to continue our industry-wide efforts to make sure that no one has to experience what your family has endured,” Zuckerberg said.

However, there has been widespread criticism from many experts, lawmakers, and parents (and even kids) who believe social media companies are avoiding real action and accountability on these issues.

When Australia’s social media ban was considered and enacted, these companies said little publicly.

“Escape from public discussion … will only breed more suspicion and mistrust,” Mr Scheeler said.

But privately, many have been trying to get the government’s ear. Spiegel personally spoke with Australian Communications Minister Anika Wells. Wells also claimed YouTube had sent globally renowned children’s entertainers The Wiggles to lobby on its behalf.

In carefully worded public statements, some companies have tried to shift blame elsewhere. Meta and Snap both said the operators of the major app stores — namely Apple and Google — should take on age verification responsibilities.

Many people believe the government has overstepped its authority. Parents know best, they say, and they should decide what makes sense for their children when it comes to social media use.

A statement provided to the BBC by Meta said: “While we are committed to meeting our legal obligations, we have always expressed concerns about this law… There is a better way: legislation empowering parents to approve app downloads and verify age, letting families – not the government – decide which apps teenagers can access.”

Asked why her government rejected that reasoning, and why anything short of a ban would be unacceptable, Wells said tech companies have had plenty of time to improve their practices.

“They’ve been in this space for 15, 20 years and now they can do it however they want and … it’s not enough.”

She said leaders from other countries felt the same way and had been knocking on her door asking for help, citing the EU, Fiji, Greece and even Malta as examples.

Denmark and Norway have already begun enacting similar laws, and Singapore and Brazil are also watching closely.

“We’re excited to be the first, we’re proud to be the first, and we stand ready to help any other jurisdictions that are trying to do these things,” Wells said.

Pinar Yildirim, a marketing professor at the University of Pennsylvania’s Wharton School, said as Australia’s ban looms, pressure is mounting on the companies to introduce versions of their products that are safer for younger users.

After all, Australia is a major market for social platforms. At a parliamentary hearing in October, Snapchat said it believed it had about 440,000 accounts in the country belonging to users aged 13 to 15. TikTok said it had about 200,000 accounts belonging to under-16s, and Meta said it had about 450,000 across Facebook and Instagram.

Experts say the companies are also keen to ensure they don’t lose out to rivals in the larger global market.

In July of this year, YouTube announced the launch of artificial intelligence technology that estimates users’ ages in order to identify those under 18 and better protect them from harmful content.

Snapchat has special accounts for users aged 13 to 17, which it says come with safety and privacy settings switched on by default.

Last year, Meta launched Instagram Teen Accounts, which similarly place users under 18 in more restrictive privacy and content settings that Meta said were designed to limit unwanted contact and exposure to explicit content. The launch was accompanied by a massive marketing blitz in the United States.

“If they create a more protected environment for these users, the idea is that that might reduce some of the damage,” Yildirim said.

Critics, however, are not satisfied. A study led by Béjar, one of the Meta whistleblowers, published in September, found that nearly two-thirds of the new safety tools on Meta’s Instagram Teen Accounts were ineffective.

“The key issue here is that Meta and other social media companies are not substantively addressing the harm that we know teenagers are experiencing,” Béjar told the BBC.

Forced to go on the defensive, the companies are trying to show that while they disagree with Australia’s impending ban, they are making a good faith effort to comply with it.

But analysts say the companies hope that obstacles, including legal challenges, children finding loopholes in the technology, and any unintended consequences of the ban, will strengthen the case against such moves in other countries.

Professor Fast points out that these companies have considerable influence over how smoothly things go.

“(They) have an incentive to take a very strict line on compliance, but make sure they don’t comply so well that every other country says, ‘Great, this works. Let’s do this too’,” Mr Scheeler agreed.

Ari Lightman, a marketing professor at Carnegie Mellon University, said fines of up to A$49.5m ($33m, £24.5m) for serious breaches may simply be considered a cost of doing business. “(They are) just a drop in the bucket,” he said, especially for large enterprises eager to capture the next generation of potential users.

Despite concerns over the implementation of the policy, Mr Scheeler said he felt this was social media’s “seatbelt moment”.

“Some people will argue that bad regulation is worse than no regulation, and sometimes that’s true, but I think in this case even imperfect regulation is better than no regulation, or better than what we had before,” he said.

“Maybe it will work, maybe it won’t, but at least we’re trying something.”