Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

The Trump administration on Friday released a legislative framework for a single national AI policy in the United States. The plan would assert Washington’s authority over state AI regulation, which could hamper recent efforts by states to regulate the technology’s use and development.

“This framework can succeed only if it is applied uniformly across the United States,” said a White House statement on the plan. “A proliferation of conflicting state regulations could undermine America’s innovation and our ability to lead the global AI race.”

The plan outlines seven goals that prioritize AI innovation, and it proposes a federal framework that would override stricter state regulations. It places greater responsibility on parents for matters such as child safety, and it sets soft, non-binding expectations for platform accountability.

For example, the plan says Congress should require AI companies to implement features that “reduce the risk of sexual abuse and harm to children,” but it does not spell out any specific requirements.

Trump’s plan comes three months after he signed an executive order directing federal agencies to challenge state AI laws. The order gave the Commerce Department 90 days to draft a list of “difficult” state AI rules, a designation that could jeopardize states’ eligibility for federal funding such as broadband grants. The department has not yet published the list.

The order also directed the administration to work with Congress on uniform AI legislation. That vision is now coming into focus, and it echoes Trump’s earlier AI strategy, which focused less on risk and more on promoting corporate growth.

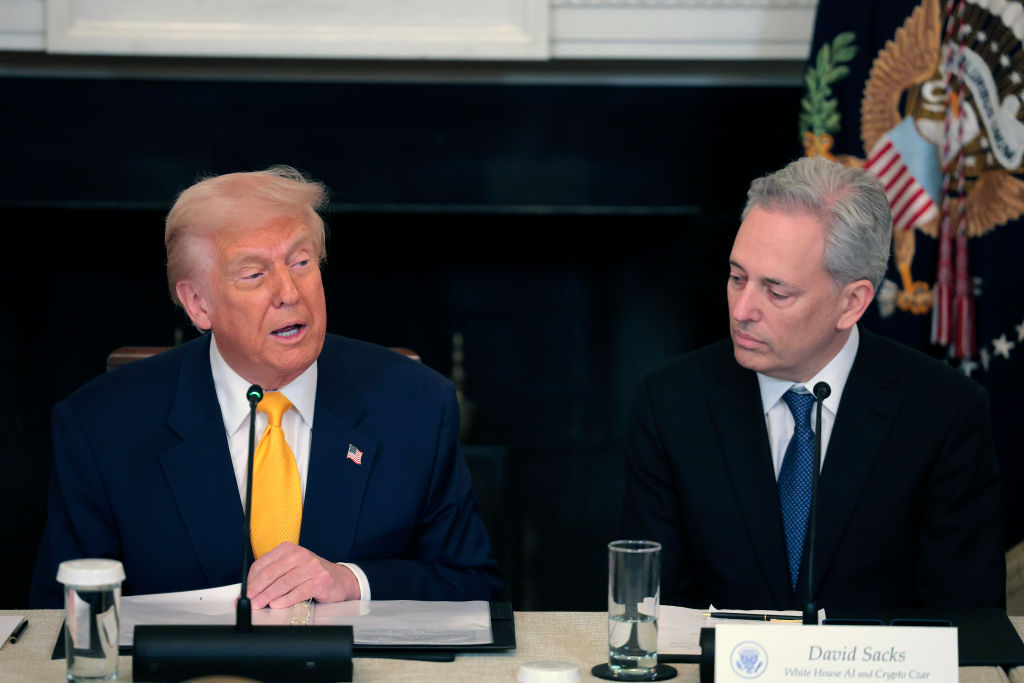

The new plan calls for “reduced burdens,” in line with the administration’s desire to “remove old or unnecessary barriers to innovation” and accelerate the adoption of AI across industries. It is a pro-growth, light-touch approach championed by “accelerationists,” one of whom is White House AI czar and venture capitalist David Sacks.

Although the framework acknowledges federalism, the room it leaves for states is limited: they would retain authority only over generally applicable laws such as fraud and child protection, zoning, and the use of AI in state government. It takes a hard line against states regulating the development of AI, which it says is a “central issue” linked to national security and foreign policy.

The framework also seeks to prevent states from “penaliz[ing] AI developers for the unacceptable behavior of another party in relation to their models,” a major liability shield for developers.

What’s missing from the framework is any provision on liability, independent oversight, or ways to prevent potential harm caused by AI. Instead, the plan would centralize AI policymaking in Washington and reduce the likelihood that states will act as regulators of emerging risks.

Critics say states are the sandboxes of democracy and have been quicker to legislate on emerging risks. In particular, New York’s RAISE Act and California’s SB 53 try to ensure that major AI companies have, and follow, publicly documented safety policies.

“White House AI czar David Sacks continues to do Big Tech’s bidding at the expense of hard-working Americans,” said Brendan Steinhauser, CEO of the Alliance for Secure AI. “This federal AI framework prevents states from enacting AI laws and does not provide a way to hold AI developers accountable for the damage they cause.”

Many in the AI industry are celebrating the framework because it gives them more freedom to “innovate” without the threat of regulation.

“This framework is what founders have been asking for: a clear national standard so they can build quickly and at scale,” Teresa Carlson, president of the General Catalyst Institute, told TechCrunch. “Innovators shouldn’t have to follow conflicting AI rules that stifle innovation.”

The framework arrives at a time when child protection has emerged as a central flashpoint in the AI debate. Several states have gone the extra mile, passing laws to protect children and to impose more responsibility on the technology industry. The framework points in a different direction, placing more emphasis on parental authority than on platform accountability.

“Parents are better equipped to control their children’s social media and behavior,” the framework states. “The administration is asking Congress to give parents tools to do this effectively, such as account controls to protect their children’s privacy and control their device usage.”

The framework also states that the administration “believes” AI platforms should “implement features that will help reduce child abuse and the promotion of self-harm.” While it wants Congress to require such protections and to ensure that existing laws, including bans on child sexual abuse material, apply to AI systems, the proposal relies on qualifiers such as “commercially reasonable” and stops short of setting clear requirements.

On copyright, the framework tries to find a middle ground between protecting creators and allowing AI systems to be trained on existing works, citing the importance of “fair use.” This kind of language mirrors the arguments AI companies have made as they face a number of copyright lawsuits over their training data.

The main AI priorities in Trump’s framework seem to include ensuring that “AI can pursue truth and accuracy without limitations.” In particular, the framework focuses on avoiding government-directed censorship rather than on how platforms moderate content themselves.

“Congress should prohibit the United States government from forcing technology providers, including AI providers, to restrict, compel, or modify public information or ideas,” it reads. It also advises Congress to give Americans a way to seek redress against government agencies that try to censor the information displayed on AI platforms or dictate the information an AI platform provides.

The framework comes as Anthropic accuses the government of violating its First Amendment rights after the Department of Defense (DOD) labeled the company a supply-chain risk. Anthropic says the DOD made the designation in retaliation for its refusal to let the military use its AI tools to surveil Americans or to make targeting and firing decisions in lethal autonomous weapons. Trump has called Anthropic and its CEO, Dario Amodei, “woke” and “very far behind.”

The framework’s language, which emphasizes protecting “politically acceptable speech or dissent,” appears to extend Trump’s earlier order targeting “woke AI,” which pushed public institutions to adopt practices that were seen as apolitical.

It is not clear where the line falls between censorship and content moderation, so this language could make it harder for regulators to engage with platforms on issues such as disinformation, election interference, or public safety risks.

Samir Jain, vice president of policy at the Center for Democracy and Technology, said: “[The framework] clearly states that the government should not force AI companies to ban or change their content on the basis of ‘partisanship or ideology,’ but this summer’s ‘woke AI’ executive order does exactly that.”