Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

Floods are among the world’s most dangerous natural disasters, killing more than 5,000 people every year. They are also among the most difficult to predict. But Google thinks it has cracked the problem in an unexpected way: by reading the news.

Although people have collected a lot of weather data, floods are too short-lived and localized to be measured comprehensively, the way temperature or river flow is monitored over time. That data gap means that deep learning models, which are increasingly used in weather forecasting, have not been able to predict floods.

To solve this problem, Google researchers used Gemini, Google’s main large language model, to analyze 5 million news stories from around the world, extract 2.6 million flood reports, and convert those reports into a geo-tagged time series called “Groundsource.” This is the first time the company has used language models in this way, according to Gila Loike, product manager for Google Research. The research and the data set were shared publicly Thursday morning.
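As a rough illustration of what a geo-tagged time series might look like, the sketch below counts flood reports per grid cell and day. It is not Google’s pipeline: the record fields (`day`, `lat`, `lon`), the sample values, and the grid spacing `CELL_DEG` are all assumptions made for the example, and the language-model extraction step itself is not shown.

```python
from collections import Counter
from datetime import date
import math

# Hypothetical records, standing in for what a language model might extract
# from news stories. Field names and values are illustrative assumptions.
reports = [
    {"day": date(2024, 3, 1), "lat": -25.97, "lon": 32.57},
    {"day": date(2024, 3, 1), "lat": -25.96, "lon": 32.58},
    {"day": date(2024, 3, 2), "lat": -25.97, "lon": 32.57},
    {"day": date(2024, 3, 1), "lat": 6.52, "lon": 3.38},
]

CELL_DEG = 0.05  # assumed grid spacing in degrees, chosen only for illustration


def cell_of(lat, lon, size=CELL_DEG):
    """Snap a coordinate to the integer index of its grid cell."""
    return (math.floor(lat / size), math.floor(lon / size))


def build_timeline(records):
    """Count reports per (grid cell, day) and return them sorted by day."""
    counts = Counter((cell_of(r["lat"], r["lon"]), r["day"]) for r in records)
    return sorted(counts.items(), key=lambda item: (item[0][1], item[0][0]))


timeline = build_timeline(reports)
for (cell, day), n in timeline:
    print(day.isoformat(), cell, n)
```

Two nearby reports on the same day fall into one cell and are counted together, which is how many separate news stories could collapse into a single dated, located flood record.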

With Groundsource as a real-world baseline, the researchers trained a model built on a Long Short-Term Memory (LSTM) neural network to take in global weather forecasts and generate flood forecasts for specific locations.
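For readers unfamiliar with LSTMs, here is a minimal sketch of how one carries information across a sequence of forecast steps and ends in a probability. It is a single-unit cell in plain Python with hand-picked, untrained weights. The two input features per step, the parameter values, and the function names are assumptions for illustration, not details of Google’s model.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def lstm_step(x, h, c, params):
    """One time step of a single-unit LSTM cell.

    x: list of input features for this step
    h, c: previous hidden state and cell state (floats)
    params: gate name -> (input weights, recurrent weight, bias)
    """
    def pre(gate):
        w, u, b = params[gate]
        return sum(wi * xi for wi, xi in zip(w, x)) + u * h + b

    f = sigmoid(pre("forget"))        # how much old memory to keep
    i = sigmoid(pre("input"))         # how much new information to admit
    o = sigmoid(pre("output"))        # how much memory to expose
    g = math.tanh(pre("candidate"))   # proposed new memory content
    c_new = f * c + i * g
    h_new = o * math.tanh(c_new)
    return h_new, c_new


def flood_probability(sequence, params, readout):
    """Run the cell over a sequence, then squash the final state to (0, 1)."""
    h, c = 0.0, 0.0
    for x in sequence:
        h, c = lstm_step(x, h, c, params)
    w, b = readout
    return sigmoid(w * h + b)


# Hand-picked, untrained weights (assumption): every gate shares one setting.
gate = ([0.5, 0.5], 0.1, 0.0)
params = {name: gate for name in ("forget", "input", "output", "candidate")}
readout = (2.0, -1.0)

# Hypothetical features per step, e.g. forecast rainfall and soil wetness.
wet = [[2.0, 1.0], [3.0, 1.5]]
dry = [[0.0, 0.0], [0.0, 0.0]]
print(flood_probability(wet, params, readout))
print(flood_probability(dry, params, readout))
```

In a trained model the weights would be learned from historical examples such as the Groundsource records. Here they only show the mechanics: the wet sequence pushes the cell state up and yields a higher output than the dry one.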

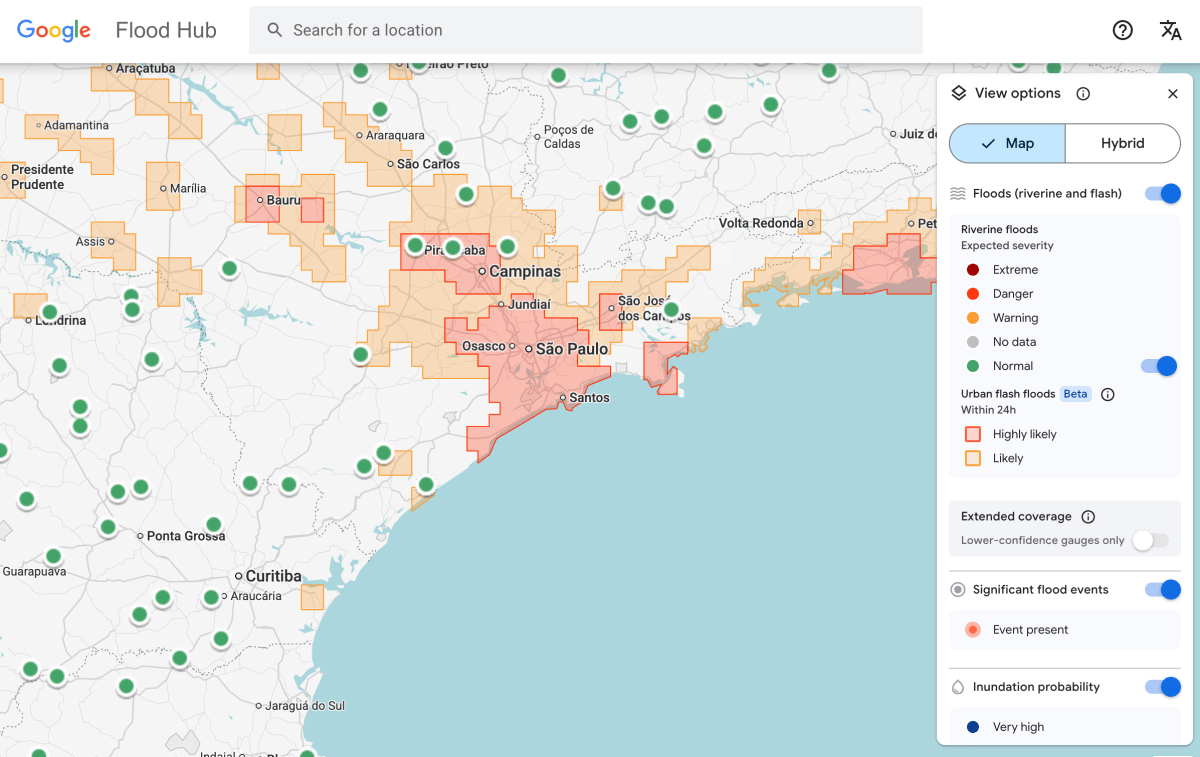

Google’s flood forecasting model now shows potential risks for cities in 150 countries on the company’s Flood Hub platform, and Google shares its information with emergency response agencies around the world. António José Beeleza, an emergency worker at the Southern African Development Community who tested the predictive model with Google, said it helped his organization respond to floods more quickly.

The model also has limitations. For one, its resolution is very low: it identifies risk only across 20-square-kilometer areas. And it’s not as accurate as the US National Weather Service’s flood warning system, in part because Google’s version doesn’t include local radar data, which helps track rainfall in real time.

Part of the point, though, is that the project is designed to work in areas where local governments cannot afford to invest in costly weather-sensing infrastructure or do not have extensive weather records.

“Because we’re aggregating millions of reports, Groundsource documents help to redefine the map,” Juliet Rothenberg, program manager on the Google Resilience team, told reporters this week. “It allows us to expand into areas where there isn’t much [data].”

Rothenberg said the team hopes that the same approach, using LLMs to turn written, qualitative reports into quantitative data, can be applied to building data sets for other ephemeral-but-important-to-predict phenomena, such as heat waves and mudslides.

Marshall Moutenot, CEO of Upstream Technology, a company that uses deep learning models to predict river flows for customers such as hydropower companies, said that Google’s contribution is part of a broader effort to grow the collection of data available to deep learning models that predict weather. Moutenot co-founded dynamical.org, a group managing a collection of machine learning data sets for researchers and developers.

“The lack of data is one of the biggest challenges in geophysics,” Moutenot said. “On one hand, there’s a lot of information about the world, and then when you want to check it against the facts, there’s not enough. This was a creative way to get that data.”