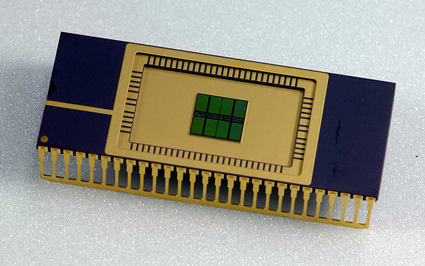

When we talk about the cost of AI infrastructure, the focus is usually on Nvidia and GPUs, but memory is an increasingly important part of the picture. As hyperscalers prepare to build billions of dollars' worth of new data centers, the price of DRAM chips has risen about 7x in the last year.

At the same time, there is a growing discipline in orchestrating all that memory to make sure the right data reaches the right provider at the right time. Companies that master it will be able to make the same queries with fewer tokens, which can be the difference between folding and staying in business.

Semiconductor analyst Doug O’Laughlin takes an interesting look at the importance of memory chips on his Substack, where he talks with Val Bercovici, head of AI at Weka. They’re both semiconductor guys, so the focus is more on the chips than on the broader architecture, but the implications for AI software are also significant.

I was particularly struck by this passage, in which Bercovici looks at the growing complexity of Anthropic’s prompt caching documentation:

The tell is if we go to Anthropic’s prompt caching pricing page. It started off as a very simple page six or seven months ago, especially as Claude Code was launching: just “use caching, it’s cheap.” Now it’s an encyclopedia of advice on exactly how many cache writes to pre-buy. You’ve got 5-minute tiers, which are very common across the industry, or 1-hour tiers, and nothing above that. That’s a really important tell. Then of course you’ve got all sorts of arbitrage opportunities around the pricing for cache reads based on how many cache writes you’ve pre-purchased.

The question here is how long Claude holds your prompt in cached memory: You can pay for a 5-minute window, or pay more for a 1-hour window. It is much cheaper to draw on data that is still in the cache, so if you manage it well, you can save a lot. There’s a catch, though: Every new bit of data you add to a query may bump something else out of the cache window.
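To make that trade-off concrete, here is a minimal Python sketch of the arithmetic. The price multipliers, the 5-minute window, and the rule that every request restarts the window are illustrative assumptions, not Anthropic’s published terms, and `CachePricing`, `cached_prefix_cost` and `uncached_prefix_cost` are hypothetical names invented for this example.

```python
from dataclasses import dataclass


@dataclass
class CachePricing:
    """Hypothetical pricing knobs; every value here is an assumption."""
    base_input: float        # price per uncached input token
    write_multiplier: float  # cost factor for writing a prefix into the cache
    read_multiplier: float   # cost factor for reading a prefix from the cache
    ttl_seconds: float       # how long a cached prefix stays warm


def cached_prefix_cost(pricing: CachePricing, prefix_tokens: int,
                       request_times: list[float]) -> float:
    """Cost of sending the same prefix at each timestamp (seconds, ascending).

    Assumption: a request inside the window is billed as a cheap read,
    a request outside it is billed as a fresh write, and either one
    restarts the window.
    """
    cost = 0.0
    expires_at = None
    for t in request_times:
        if expires_at is not None and t < expires_at:
            cost += prefix_tokens * pricing.base_input * pricing.read_multiplier
        else:
            cost += prefix_tokens * pricing.base_input * pricing.write_multiplier
        expires_at = t + pricing.ttl_seconds
    return cost


def uncached_prefix_cost(pricing: CachePricing, prefix_tokens: int,
                         n_requests: int) -> float:
    """Cost of resending the prefix at full price on every request."""
    return prefix_tokens * pricing.base_input * n_requests


if __name__ == "__main__":
    # Illustrative numbers only: 1 unit per token, 5-minute window.
    pricing = CachePricing(base_input=1.0, write_multiplier=1.25,
                           read_multiplier=0.1, ttl_seconds=300)
    burst = [0, 60, 120]   # three requests, each inside the window
    sparse = [0, 600]      # second request arrives after the window closed
    print("burst, cached:   ", cached_prefix_cost(pricing, 1000, burst))
    print("burst, uncached: ", uncached_prefix_cost(pricing, 1000, len(burst)))
    print("sparse, cached:  ", cached_prefix_cost(pricing, 1000, sparse))
    print("sparse, uncached:", uncached_prefix_cost(pricing, 1000, len(sparse)))
```

Under these made-up numbers, a burst of requests inside the window costs far less with caching than without, while requests spaced wider than the window pay the write premium every time and end up more expensive than not caching at all. The sketch leaves out the catch about new data bumping older entries out of the cache.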

This is complex stuff, but the upshot is simple: Managing memory in AI models will be a big part of AI going forward. Companies that do it well will rise to the top.

And there is plenty of progress to be made in this new field. Back in October, I covered a startup called Tensormesh, which was working on one layer of the stack known as cache optimization.

Opportunities exist in other parts of the stack. For example, lower down the stack, there is the question of how data centers are using the different types of memory they have. (The interview includes a good discussion of when DRAM chips are used instead of HBM, although it’s pretty deep in the hardware weeds.) Higher up the stack, end users are figuring out how to configure their setups to take advantage of the shared cache.

As companies get better at memory orchestration, they will use fewer tokens and inference will become cheaper. Meanwhile, models are getting more efficient at processing each token, pushing the cost down even further. As server costs drop, many applications that seem out of the question now will start to become profitable.